The Slop Economy

The rise of AI slop and what it means for commerce

Most of us can spot it now: the “it's not X, it's Y” construction, or words like “quietly” and “operating system” that you never noticed before but now seem to see everywhere. It's commonly called AI slop, the overabundance of certain phrases and constructions in our written communication as more people use AI to write. The irony is that most people complain about AI slop because of its repetition, yet still use AI to write because it sounds credible and often produces superior results, particularly on social media. This has big implications for the ads we see, and for how commerce evolves to engender trust and authenticity in a world where the content we consume is increasingly artificial.

AI Slop Is Having a Moment

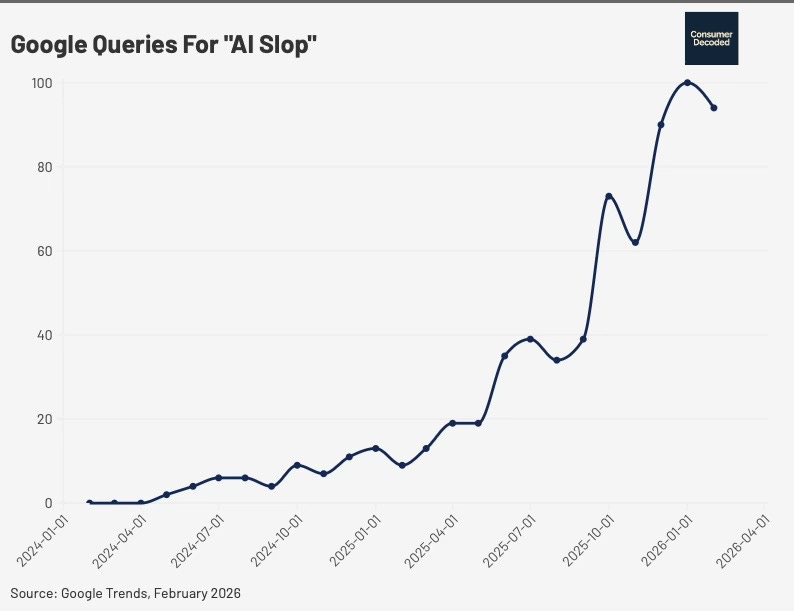

Merriam-Webster named “slop” its 2025 Word of the Year, defining it as “digital content of low quality that is produced usually in quantity by means of artificial intelligence.” It’s basically spam’s more polished cousin. The term gained traction in 2024, and its use kept accelerating into 2025.

Part of what is fueling the word's use is the dramatic increase in AI-generated content. As shown in the video below, a study by Graphite found that in November 2024 the number of AI-generated articles on the internet surpassed the number written by humans. In other words, both the volume and the share of AI-generated content are rising sharply.

Saturation Breeds Skepticism

Social media is one of the places where AI slop has become most prolific, and consumers are unhappy about it. According to a recent CNET study, 94% of US social media users believe they encounter AI-generated content. And according to an early February article from the BBC, “Sometimes the number of likes for the AI backlash comments far exceed the original post.”

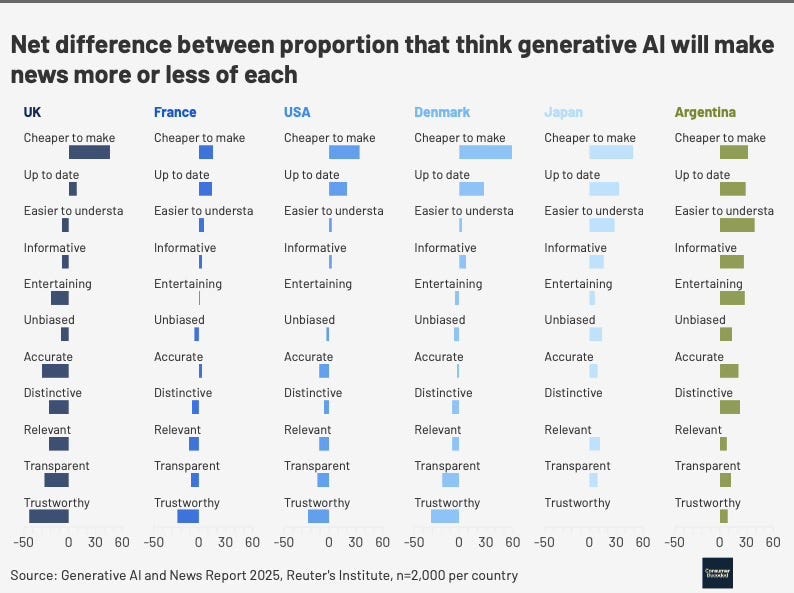

Even in news media, consumers believe AI is being used and generally believe it will not benefit them. In a recent Reuters poll, readers in four of the six countries surveyed believed that generative AI will make the news less distinctive, less relevant, less transparent, and less trustworthy. In the UK, the only benefits readers anticipate from generative AI are that it will make the news more up-to-date and cheaper to produce.

Trust in the Age of Content Farms

While consumers are pushing back on AI-generated content, brands are accelerating its use. As brands seek to improve their discoverability on agent platforms like ChatGPT and Gemini, they are turning to strategies that increase content production across both traditional advertising and editorial. One technique is building “content farms” that use AI to mass-generate content designed to elevate a brand in responses to specific research queries.

The result is a dramatic increase in AI-generated advertising content. According to Adobe’s study of 750 retail executives, 92% said they were using AI to enhance product descriptions or images. Adobe also found that 86% of creators are using generative AI to create content.

But while this increases volume, it does not necessarily increase human engagement. According to a 2024 NIQ study, consumers found AI-generated ads “confusing, boring and annoying.” The same study found that AI-generated ads elicited weaker memory activation in the brain.

The Trust Premium

So if agent platforms are pulling content from editorial outlets, Reddit, and other sources, and that content is increasingly produced by AI, should we trust it? Many consumers say no and are demanding more labeling of content. In an environment where content is cheap and volume explodes, trust and curation should command a premium. Many solutions are under discussion, ranging from content certification and watermarking to other verification labels. So far, though, most existing mechanisms simply let creators self-identify whether they used AI to make their content. But what does that label really mean? Some creators use AI at every step of their workflow, including idea generation and writing, while others use it only for graphics or editing. Does the latter count?

Summary

We are living in an era of increasingly AI-generated content, whether that is a LinkedIn post, a TikTok video, a product review, or a major brand advertisement. While this trend is being embraced by everyone from individual creators to large brands, consumers see little tangible benefit from it. What’s more, in a world where agents handle more of a consumer’s shopping, moving from product recommendations to completed purchases, how do we trust what is being recommended? Solutions are evolving, and in the future we may see clearer markings of authentic content. We are entering an era where authenticity will be a premium currency.

Consumer Decoded AI Policy

For clarity, Consumer Decoded uses AI to synthesize large volumes of information, pressure-test ideas, explore counterarguments, and analyze data patterns. Every framework, argument, and point of view is developed, structured, and edited by me and two human editors—Sydney Black and Jay White (yes, my editing is black and white). All arguments, frameworks, and conclusions reflect my own analysis and editorial judgment.

Interesting Reading

“AI ‘slop’ is transforming social media - and a backlash is brewing,” BBC, February 4, 2026.

“How to Create An Equal Household,” The Atlantic, February 26, 2026.

“X is Drowning in Misinformation Following US and Israel’s Attack on Iran,” Wired, February 28, 2026.
